A lot of companies are approaching AI visibility in fragments.

They publish an article here, add an FAQ there, check a few prompts, react to a strange answer, and hope the whole thing adds up.

Usually, it does not.

That is because AI visibility is not one tactic. It is a system.

If you want your brand to show up accurately and consistently in AI-assisted discovery, you need more than scattered content. You need an operating system.

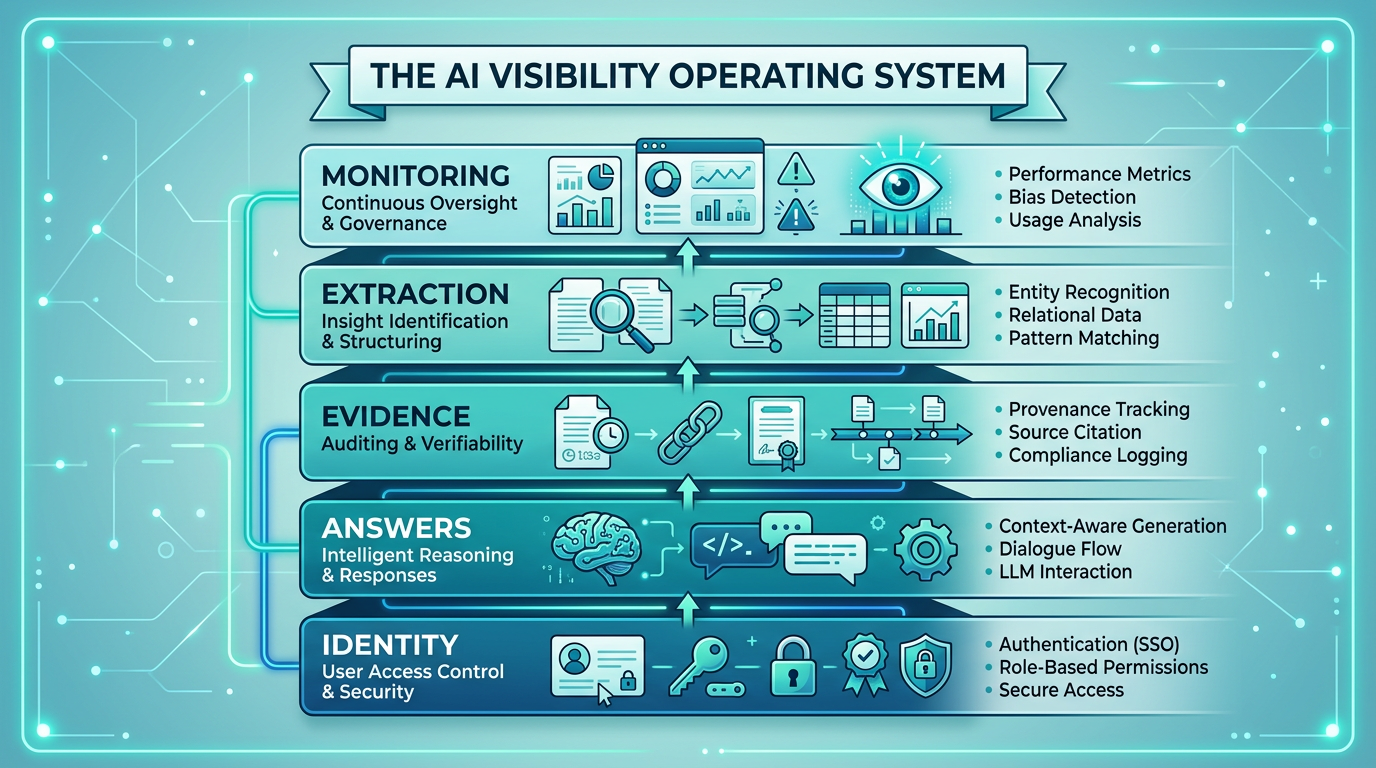

The AI Visibility Operating System is a practical way to think about that system.

It has five layers:

- Identity

- Answers

- Evidence

- Extraction

- Monitoring

Each layer solves a different problem. Together, they reduce ambiguity and improve the odds that AI systems can find, understand, and repeat your brand correctly.

Why an operating system matters

AI visibility problems often look like output problems.

- We were not mentioned

- The answer was vague

- A competitor showed up instead

- The description was partly wrong

But output problems usually begin upstream. See "AI visibility starts before recommendation" for the earlier steps: discovery, access, interpretation, classification, and extraction.

Maybe the system could not clearly classify the business. Maybe the right answers were missing. Maybe the evidence was weak. Maybe the page was hard to extract from. Maybe no one noticed the drift until it had already spread.

That is why a system matters.

Instead of reacting to one answer at a time, you build the underlying layers that make stronger answers more likely over time.

Not guaranteed. Just better supported.

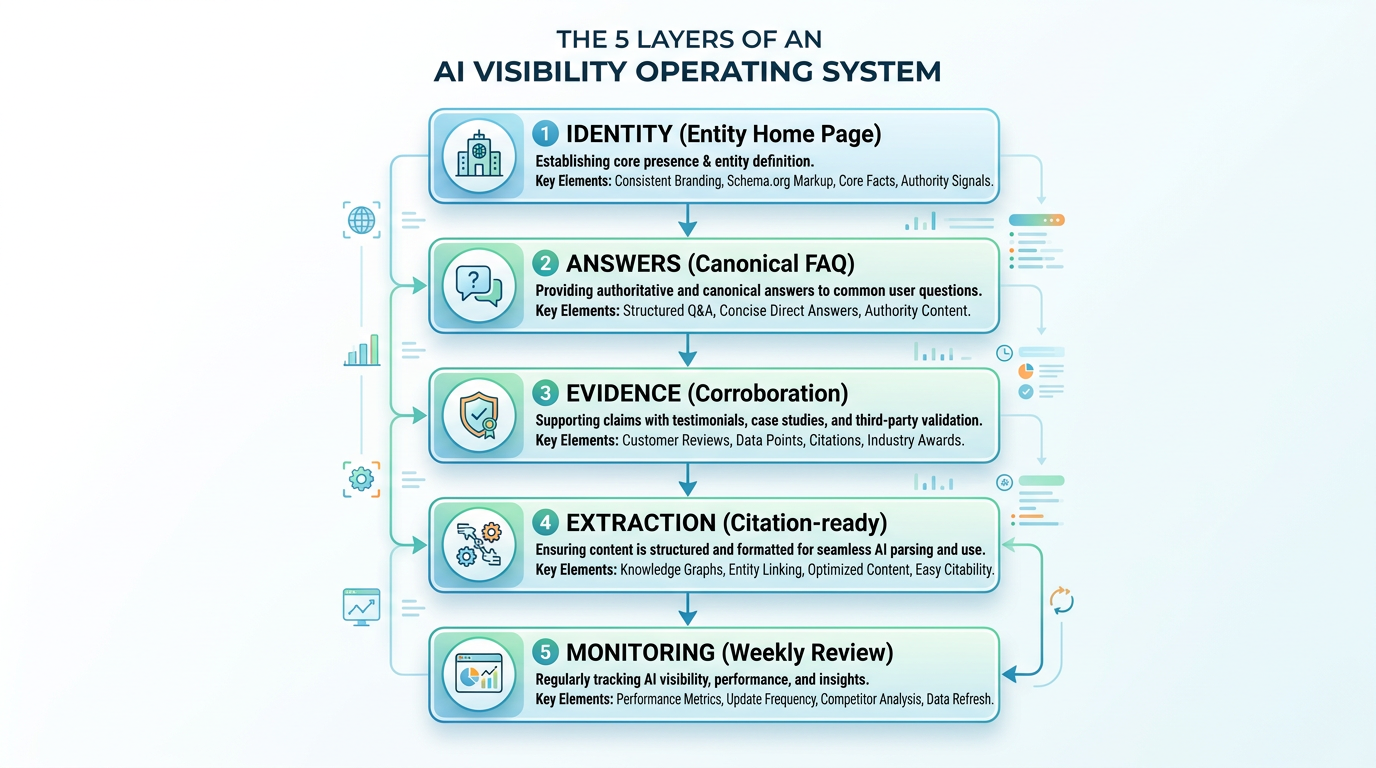

Layer 1: Identity

This layer answers the most basic question:

What is this business, exactly?

If that is unclear, everything after it gets weaker.

The Identity layer includes:

- an Entity Home Page

- clear category language

- audience definition

- geographic scope, when relevant

- explicit "what we are not" statements

This is where you reduce classification problems.

A strong Identity layer helps AI systems understand:

- what the company is

- who it serves

- what bucket it belongs in

- what it should not be confused with

Without that, the system starts inferring from scattered clues.

Layer 2: Answers

Once identity is clear, the next question is:

Can the system find direct answers to the evaluation questions people care about?

This is the Answers layer.

It includes:

- a Canonical FAQ

- comparison pages

- "best for" and "not for" pages

- pricing clarification

- trust and legitimacy answers

- limitations and constraints

This layer exists because people do not just ask, "What is this?"

They ask:

- Who is it for?

- How is it different?

- Is it legitimate?

- What does it not do?

- How does pricing work?

- What are the limitations?

If your site does not answer those clearly, the system may guess.

Layer 3: Evidence

The third question is:

Is there enough corroboration for the system to trust and repeat the core identity and claims?

This is the Evidence layer.

It includes:

- aligned website pages

- consistent public profiles

- directory listings

- partner references

- third-party mentions

- original data or structured observations

This is where your truth stops being a single page and starts becoming a network of reinforcing signals. This is the corroboration layer in the Truth-Hardening Stack.

The goal is not volume. The goal is consistency.

A strong Evidence layer makes it easier for AI systems to find agreement across sources instead of conflict.
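
One way to make "consistency, not volume" concrete is a small alignment check across your public descriptions. The sketch below is illustrative only: the brand "Acme Ledger", the source names, and the `find_conflicts` helper are all hypothetical, and simple phrase matching stands in for whatever comparison you would actually use.

```python
# Hypothetical sketch: flag public sources whose description omits the
# canonical category or audience phrase. All names and data are invented.

def find_conflicts(canonical: dict[str, str], sources: dict[str, str]) -> dict[str, list[str]]:
    """Return, per source, the canonical facts its description does not state."""
    conflicts: dict[str, list[str]] = {}
    for source_name, description in sources.items():
        lowered = description.lower()
        missing = [fact for fact, phrase in canonical.items() if phrase.lower() not in lowered]
        if missing:
            conflicts[source_name] = missing
    return conflicts


# The core truth you want every source to repeat (assumed values).
canonical = {"category": "bookkeeping software", "audience": "freelancers"}

# Descriptions copied by hand from each public source (assumed values).
sources = {
    "website": "Acme Ledger is bookkeeping software for freelancers.",
    "directory": "Acme Ledger: accounting firm serving small businesses.",
    "partner_page": "Bookkeeping software built for freelancers, by Acme Ledger.",
}

conflicts = find_conflicts(canonical, sources)
print(conflicts)
```

In this made-up data, only the directory listing disagrees with the core description, so it is the one to fix first. Exact phrase matching is deliberately strict; a looser comparison would miss fewer paraphrases but also flag less.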

Layer 4: Extraction

Now assume the system found the page and the right answers exist.

The next question is:

Can the system extract the right information quickly and cleanly?

This is the Extraction layer.

It includes:

- top-of-page definitions

- direct answers near the top

- question-based headings

- explicit negatives

- concise answer blocks

- summary bullets

- citation-ready structure

See the Citation-Ready Page Blueprint for page structure templates.

This matters because a buried truth is weaker than an accessible truth. If your key definitions, comparisons, and boundaries are hard to pull from the page, the resulting answers get weaker even when the right information technically exists.

Extraction is where clarity becomes usable.
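
Two of the items above, top-of-page definitions and question-based headings, are easy to spot-check. The following is a rough sketch, not a measure of how any AI system actually extracts content: the `extraction_report` helper, the 60-word cutoff, and the sample page are assumptions made for illustration.

```python
# Hypothetical sketch: check whether the brand and category appear near the
# top of a page, and what share of headings are phrased as questions.

def extraction_report(page_text: str, headings: list[str], brand: str,
                      category: str, top_words: int = 60) -> dict[str, object]:
    """Return two simple extraction signals for one page."""
    # Only look at the first `top_words` words (an assumed cutoff).
    top = " ".join(page_text.split()[:top_words]).lower()
    question_headings = [h for h in headings if h.strip().endswith("?")]
    return {
        "definition_near_top": brand.lower() in top and category.lower() in top,
        "question_heading_share": len(question_headings) / len(headings) if headings else 0.0,
    }


# Invented sample page for a hypothetical brand.
page_text = (
    "Acme Ledger is bookkeeping software for freelancers. "
    "It is not an accounting firm and does not file taxes on your behalf."
)
headings = ["What is Acme Ledger?", "Who is it for?", "Pricing"]

page_report = extraction_report(page_text, headings, "Acme Ledger", "bookkeeping software")
print(page_report)
```

Here the definition appears in the opening sentence and two of the three headings are questions. A page that fails either check is a candidate for a rewrite.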

Layer 5: Monitoring

Finally, even strong systems drift over time.

That is why the fifth layer is Monitoring.

This layer includes:

- prompt clusters

- inclusion checks

- accuracy checks

- stability checks

- competitor tracking

- weekly review habits

- action logs

See The Weekly AI Visibility Review for a repeatable Friday workflow.

Monitoring answers the question:

Is the system still working the way we think it is?

Without this layer, teams tend to rely on random screenshots, isolated prompt checks, and emotional reactions.

With it, AI visibility becomes something you can manage calmly and repeatedly.
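
As a rough illustration, inclusion, accuracy, and stability checks can be computed from a log of recorded answers. This is a minimal sketch under stated assumptions: the `Observation` record, the `weekly_report` and `change_since` helpers, the brand "Acme Ledger", and the sample answers are all hypothetical, and phrase matching is a stand-in for a human accuracy review.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    prompt: str  # a prompt from one of your prompt clusters
    answer: str  # the AI answer text, recorded by hand or by your own tooling


def weekly_report(observations: list[Observation], brand: str,
                  must_say: str, must_not_say: list[str]) -> dict[str, float]:
    """Inclusion: share of answers naming the brand.
    Accuracy: share of those answers that state `must_say` and avoid every
    phrase in `must_not_say` (a simplifying assumption)."""
    included = [o for o in observations if brand.lower() in o.answer.lower()]
    accurate = [
        o for o in included
        if must_say.lower() in o.answer.lower()
        and not any(bad.lower() in o.answer.lower() for bad in must_not_say)
    ]
    return {
        "inclusion_rate": len(included) / len(observations) if observations else 0.0,
        "accuracy_rate": len(accurate) / len(included) if included else 0.0,
    }


def change_since(current: dict[str, float], previous: dict[str, float]) -> dict[str, float]:
    """Stability: week-over-week change for each metric."""
    return {metric: current[metric] - previous[metric] for metric in current}


# Invented log entries for one prompt cluster.
observations = [
    Observation("best bookkeeping tools for freelancers",
                "Acme Ledger is bookkeeping software for freelancers."),
    Observation("bookkeeping apps for freelancers",
                "Popular options include OtherBooks and Acme Ledger, an accounting firm."),
    Observation("simple bookkeeping app", "Try OtherBooks."),
    Observation("what is Acme Ledger",
                "Acme Ledger offers bookkeeping software aimed at freelancers."),
]

report = weekly_report(observations, "Acme Ledger",
                       must_say="bookkeeping software",
                       must_not_say=["accounting firm"])
last_week = {"inclusion_rate": 0.5, "accuracy_rate": 1.0}  # assumed prior values
change = change_since(report, last_week)
print(report, change)
```

In this invented log the brand appears in three of four answers, and one of those three misdescribes it. Recording the same prompts each week is what makes the week-over-week change meaningful.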

How the layers work together

The most important thing to understand is this:

These are not separate tactics. They are connected.

- Identity improves classification

- Answers reduce guesswork

- Evidence supports trust

- Extraction improves usability

- Monitoring catches drift

If one layer is weak, the whole system gets weaker.

For example:

- strong content with weak identity can still be misclassified

- strong identity with weak answers can still leave major gaps

- strong answers with weak extraction can still underperform

- strong structure with no monitoring can still drift unnoticed

That is why this works better as an operating system than a checklist.

A simple diagnostic

If you want to know where your system is weakest, ask these five questions:

Identity: Would a new visitor know exactly what this business is within the first few lines?

Answers: Does the site directly answer the questions real buyers, and AI systems, are likely to ask?

Evidence: Do multiple public sources align around the same core truth?

Extraction: Can the system pull the key facts quickly without reading the whole site?

Monitoring: Do you have a repeatable review process for inclusion, accuracy, and stability?

Wherever the answer is weakest, that is your next build priority.

What to fix first

If Identity is weak: Start with the Entity Home Page.

If Answers are weak: Build the Canonical FAQ and comparison pages.

If Evidence is weak: Align profiles, listings, and public references. See the Truth-Hardening Stack for the corroboration layer.

If Extraction is weak: Rewrite your top pages for directness and structure. Use the Citation-Ready Page Blueprint.

If Monitoring is weak: Create a weekly AI visibility review routine.

Simple beats fancy here.
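
In that spirit, the diagnostic and the fix list can be combined into a few lines. This is a sketch under assumptions the article does not specify: the 1 to 5 self-scoring scale, the `next_priority` helper, and the rule that ties go to the earlier layer are all hypothetical choices.

```python
# First fix per layer, taken from the list above. Order matters: it is the
# layer order used to break ties.
FIRST_FIX = {
    "Identity": "Start with the Entity Home Page.",
    "Answers": "Build the Canonical FAQ and comparison pages.",
    "Evidence": "Align profiles, listings, and public references.",
    "Extraction": "Rewrite your top pages for directness and structure.",
    "Monitoring": "Create a weekly AI visibility review routine.",
}


def next_priority(scores: dict[str, int]) -> tuple[str, str]:
    """Return the weakest layer and its first fix.

    `scores` maps each layer to a self-assessed score (assumed scale:
    1 = weak, 5 = strong). On a tie, the earlier layer wins, on the
    assumption that upstream weaknesses should be fixed first.
    """
    missing = set(FIRST_FIX) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    weakest = min(FIRST_FIX, key=lambda layer: scores[layer])
    return weakest, FIRST_FIX[weakest]


# Invented self-assessment.
scores = {"Identity": 4, "Answers": 3, "Evidence": 2, "Extraction": 2, "Monitoring": 5}
layer, fix = next_priority(scores)
print(f"{layer}: {fix}")
```

With these example scores, Evidence and Extraction tie for weakest, and the tie-break picks Evidence because it sits earlier in the layer order.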

Why this model matters

The AI visibility conversation often gets reduced to one question:

"How do we get mentioned more?"

That question is too narrow.

A better question is:

"How do we build a system that makes accurate inclusion easier over time?"

That is what the AI Visibility Operating System is for.

It gives you a way to think beyond random tactics and start building a repeatable foundation.

Bottom line

AI visibility is not one trick, one page, or one prompt.

It is a system made up of five connected layers:

- Identity

- Answers

- Evidence

- Extraction

- Monitoring

If you strengthen those five layers consistently, you make it easier for AI systems to find, understand, and repeat your business correctly.

That is a much better long-term strategy than chasing one answer at a time.

See How It Works for the audit flow and our methodology for how we measure AI visibility.