Can AI confidently verify your business?

Get your AI Readiness Score in 60 seconds. See the signal gaps that cause omission, confusion, or outdated summaries.

AI systems rely on public signals; weak signals increase the risk of omission or misrepresentation.

Readiness evaluates clarity and trust signals in isolation. Competitive visibility is a separate report that introduces competitors.

Get your free AI Readiness Score and see:

- Your signal gaps (clarity, evidence, coverage, structure)

- What AI is likely to infer from your public signals

- The top 3 things to fix right now

Evidence-backed diagnostics where possible. No guarantees of inclusion or rankings. Readiness scoring is signal-based. Competition Runs are available after signup and are reported separately from Readiness.

How It Works

Identify your entity

URL + location confirmation

Analyze public signals

Clarity, evidence, coverage, structure

Get your score + top fixes

Instant results & insights

Readiness scoring does not rely on live AI answers. Competitive reports are separate.

Right now, your signals may be too weak.

AI systems rely on public signals; weak or inconsistent signals increase the risk of omission, confusion, or outdated summaries. This free Readiness audit shows you where your signals are strong, where they are missing, and what to fix first.

When signals are weak:

- Risk of omission or misrepresentation

- Your differentiators may not surface

- AI may repeat outdated or incomplete info

- You may miss opportunities without knowing it

What You'll Get

AI Readiness Score (0–100)

Score reflects entity clarity + evidence strength + content coverage + structured signals (with stability adjustment).

What AI is likely to infer

Based on your public signals — clarity, evidence, coverage, structure.

Top 3 fixes to improve readiness

Prioritized actions to strengthen your signals.

Why This Matters Now

AI systems increasingly rely on public signals to interpret brands. Weak signals increase the risk of omission or misrepresentation. Don't guess — see your Readiness and fix the gaps.

Quick FAQ

- What is AI Presence?

- A diagnostic platform that helps organizations understand how AI systems interpret and recommend them. It evaluates clarity, trust, and corroboration signals that AI systems rely on.

- Are AI Presence scores rankings?

- No. Scores are not rankings, probabilities, or predictions. They are relative indicators for prioritization and planning.

- Can AI Presence guarantee inclusion in AI answers?

- No. We make no guarantees regarding inclusion, citation, recommendation, or visibility in AI-generated outputs.

- Why are there two scores?

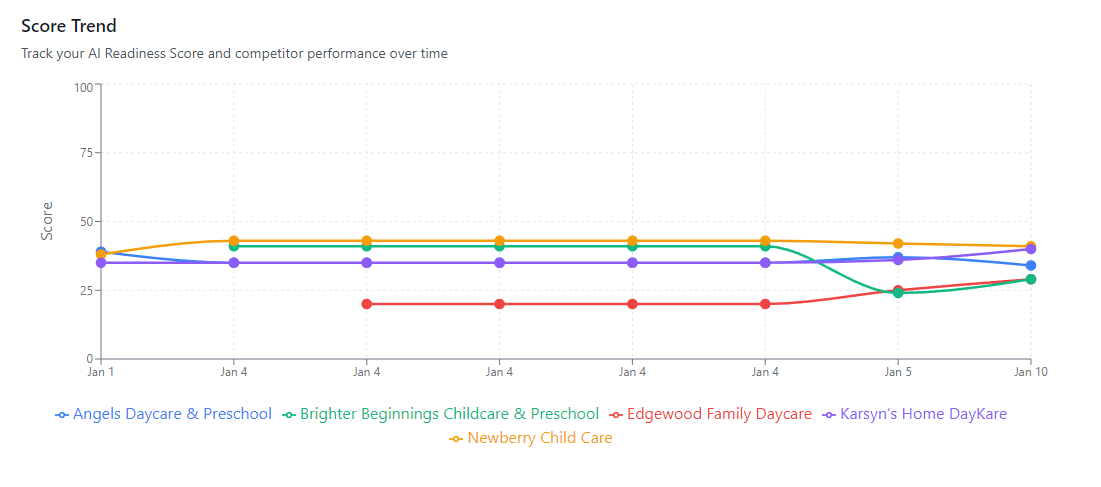

- AI Readiness evaluates you in isolation. Competitive AI Visibility evaluates relative positioning when competitors are introduced. Both are reported separately.

Get your free AI Readiness Score and see the signal gaps that matter.
